There are well over 60 distinct operating systems for commercial use categorised on Wikipedia. Those are merely the noteworthy listings with reputable citations.

We’ve all tasted Windows. Some savour macOS. The computer-savvy favour various flavours and dressings of GNU/Linux, and by extension, Android. The software memers relish the acquired taste of TempleOS. That leaves us with at least 55 more to sample today.

Extensive research continues to push performance and standards in operating systems, notably in the study of microkernels. Before we sift through the tangled timeline of operating systems, it’s worth examining just what they do in the first place. Afterwards, I will serve tales of sleazy deals that tail significant breakthroughs in the history of computing.

Menu | Operating Systems

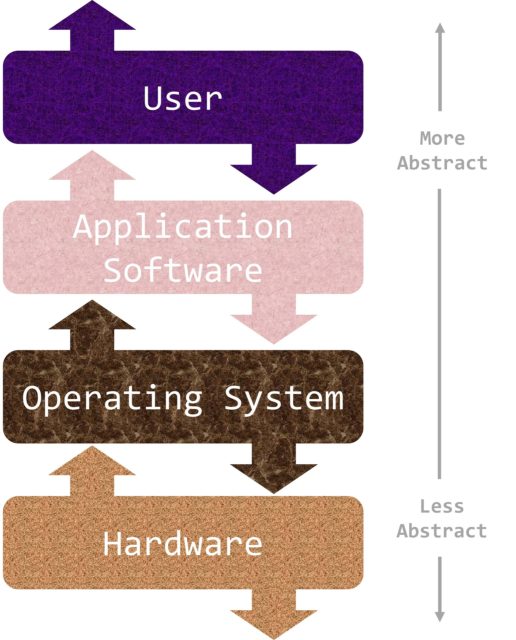

At their core, operating systems allocate resources and manage program execution. They are themselves software programs, acting as a helpful sealant that closes the gap between application software and hardware. The key idea is that developers need not understand the underlying units – hard disk, RAM, mouse, keyboard – to write sensible code.

To illustrate this point, consider web development. Backend engineers can remain ignorant of any physical components so long as their code conforms to the web server’s operating system. Similarly, frontend engineers can remain platform-agnostic, provided our browsers can run the web’s languages, such as JavaScript.
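Here is a minimal sketch of that idea in Python. The function name `round_trip` is my own invention for illustration. Notice that nothing in it mentions a disk controller, a file system format or a memory address: the operating system picks the storage location and handles the hardware on our behalf.

```python
import os
import tempfile


def round_trip(text: str) -> str:
    """Write text to a temporary file and read it back.

    Hypothetical helper for illustration only. Every hardware detail
    (which disk, which sectors, which driver) is left to the OS.
    """
    # The OS chooses where the temporary file lives.
    fd, path = tempfile.mkstemp(suffix=".txt")
    try:
        # Wrap the OS-level file descriptor in a regular Python file object.
        with os.fdopen(fd, "w", encoding="utf-8") as handle:
            handle.write(text)
        # Ask the OS for the same bytes back.
        with open(path, "r", encoding="utf-8") as handle:
            return handle.read()
    finally:
        # Clean up so the example leaves nothing behind.
        os.remove(path)


if __name__ == "__main__":
    print(round_trip("hello, hardware"))
```

The same script should run unchanged on Windows, macOS or GNU/Linux, which is exactly the gap an operating system is there to seal.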

Different use cases require different intermediaries, aptly named operating systems. For instance, an operating system built for the hardware architecture of my laptop doesn’t carry over well to a typical game console. A supercomputer is a different matter altogether. It is then unsurprising to note that a large share of the 55 others are near-obsolete today. They eat less than 5% of the desktop operating systems pie. The hardware for which they were intended has aged like milk.

Now, let’s start by paying homage to the sizzling 40s.

Starter | Plugboards | 1st Gen Computing

IBM served the very first electronic computers in 1939. They functioned as simple numerical calculators crunching trigonometry and logarithm tables. They lacked operating systems, however. This is unimaginably horrifying today: programming was done in absolute machine language by wiring up plugboards on these dinosaurs. Programs simply weren’t portable, as no compilers for hardware-independent programming languages existed during this epoch. The programmers had to wait agonising hours hoping the vacuum tubes forming the circuitry wouldn’t sizzle out like their willpower.

Soup Selection | Punched Cards | 2nd Gen Computing

The 50s marked an era of punched cards and higher-level programming languages. All scientific calculation programs were written by hand in assembly language or Fortran. Once punched onto cards, they were handed over to human operators who collated them in batches onto magnetic tapes. Special programs – ancestors of today’s operating systems – in turn ran the manually loaded jobs one at a time.

Such organised and faster computations were paramount since a single mainframe cost roughly £60,000.

Main Course | Multiprogramming | 3rd Gen Computing

Two seminal concepts were popularised in the 60s and 70s: multiprogramming and utility computing. All programmers were longing to hog the system, vying for the operators’ attention.

The former aimed to minimise CPU idling during tape reads, which took up 80% of the total time. Memory was partitioned so that several jobs could be resident at once: while one job waited on the tape, another could use the CPU. Time-sharing followed later, allowing multiple users to share the system resources at the same time via online terminals.
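To put a rough number on the benefit, here is a back-of-the-envelope sketch using the usual textbook approximation, not anything specific to a machine of the era. It assumes each job waits on I/O for the same fraction of its time and that the jobs wait independently of one another, so the CPU only idles when every resident job is waiting at once. The function name `cpu_utilisation` is my own, and the 0.8 comes from the 80% figure above.

```python
def cpu_utilisation(io_wait_fraction: float, jobs: int) -> float:
    """Estimate the fraction of time the CPU is busy.

    Simplified model (an assumption, not a measurement): with `jobs`
    programs in memory, each independently waiting on I/O for
    `io_wait_fraction` of the time, the CPU is idle only when all of
    them wait simultaneously, i.e. with probability io_wait_fraction ** jobs.
    """
    if not 0.0 <= io_wait_fraction <= 1.0:
        raise ValueError("io_wait_fraction must be between 0 and 1")
    if jobs < 0:
        raise ValueError("jobs must be non-negative")
    return 1.0 - io_wait_fraction ** jobs


if __name__ == "__main__":
    # 0.8 mirrors the 80% tape-reading share quoted in the text.
    for jobs in (1, 2, 4, 8):
        busy = cpu_utilisation(0.8, jobs)
        print(f"{jobs} job(s) in memory: CPU busy {busy:.0%} of the time")
```

Under these assumptions a lone job keeps the CPU busy only 20% of the time, while four jobs sharing memory push that to roughly 59%. Real workloads are messier, but the trend explains why partitioning memory was worth the trouble.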

The latter never quite took off. In the mid-60s, MIT, Bell Labs and General Electric got together to build MULTICS (MULTiplexed Information and Computing Service), which entered service at MIT in 1969. Its developers envisioned supplying computing power to everyone in the Boston area. Alas, this was a little too ambitious for its time, not least because the processing speed barely reached a thousandth of today’s laptop capacity.

That being said, utility computing later resurfaced through the likes of IBM. Tech giants of the 80s lent computing power and database storage by the hour to large institutions such as banks.

Dessert | PCs | 4th Gen Computing

We enter the present: personal computers run operating systems that are machine-independent by design. (Assuming the computer instruction set architecture is based on either ARM or x86, which comprise 99% of the CPU market.) This is all thanks to the work of disgruntled Bell Labs scientists who had worked on MULTICS.

They launched their spin-off under the name UNICS (Uniplexed Information and Computing Service), a pun on its failed predecessor, and the spelling later settled on UNIX. Its tangled history deserves a spicy post of its own. I highly recommend this dubiously hilarious anecdote featuring Unirexia Nervosa if you want to consume it in greater detail.

The focus of such research was on modular system design. C was born at Bell Labs specifically for development on the PDP-11 minicomputers running UNIX. Its success as a programming language is attributed to its flexible compiler, which lends itself well to portability. The Bell System Technical Journal states that “the operating systems of the target machines were as great an obstacle to portability as their hardware architecture”. Consequently, UNIX was rewritten in C, thereby creating the first ever portable operating system.

Cheese Selection | Virtual Machines | 5th Gen Computing

The 90s handed us viable virtualisation technology: multiple self-contained operating systems can run on the same piece of hardware. It draws upon time-sharing capabilities facilitated by improvements in multiprocessing since the 3rd Generation.

Virtual operating systems shine during server deployment. System administrators can upgrade services, safe in the knowledge that any software dependency trouble is isolated to that virtual machine. When the server malfunctions, it can be quickly restored to a previous state via snapshots, thus saving a lengthy rebuild. Imagine reinstalling every single software package all over again! And sometimes, certain (usually legacy) applications can only run on a limited subset of operating systems, usually because they were specially built for industrial purposes or because their publisher filed for bankruptcy long ago.
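The snapshot-then-restore routine can be sketched with a toy model. To be clear, this is not how a real hypervisor works: real snapshots capture disk and memory state, whereas this hypothetical `ToyVirtualMachine` class (every name in it is my own) only tracks a dictionary of package versions. It is merely meant to show why rolling back beats reinstalling.

```python
import copy


class ToyVirtualMachine:
    """Toy stand-in for a virtual machine, for illustration only.

    Its entire "state" is a mapping of package name to version.
    """

    def __init__(self, packages):
        self.packages = dict(packages)
        self._snapshots = {}

    def snapshot(self, label):
        # Store an independent copy so later changes cannot leak into it.
        self._snapshots[label] = copy.deepcopy(self.packages)

    def upgrade(self, name, version):
        # Any trouble caused here stays inside this one machine.
        self.packages[name] = version

    def restore(self, label):
        # Roll the whole machine back to the saved state in one step.
        self.packages = copy.deepcopy(self._snapshots[label])


if __name__ == "__main__":
    vm = ToyVirtualMachine({"webserver": "1.0", "database": "5.1"})
    vm.snapshot("before-upgrade")
    vm.upgrade("webserver", "2.0")  # suppose this upgrade misbehaves
    vm.restore("before-upgrade")
    print(vm.packages)
```

The administrator never has to remember which packages changed. The snapshot remembers for them, and other machines on the same hardware are none the wiser.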

The advent of affordable “smaller-than-mainframes-but-bigger-than-PCs” minicomputers had long quashed the business model of utility computing by the mid-90s. But starting in 2006, the rise of cloud computing services like Amazon’s EC2 would revitalise this practice via virtual machines.

Bill Please | Operating Systems Research

Miniaturisation of electronic components made possible the development of desktop workstations with capabilities exceeding the ubiquitous monstrosities that filled up entire halls some 30-odd years ago.

The roots of UNIX utterly astound me, and I haven’t done them justice in this taster course. Nowadays, many students unknowingly take plug-and-play USB booting (i.e. portability of operating systems) for granted. Let’s not forget the remarkable innovations by a handful of developers at Bell Labs that changed the history of computing.

Check out the USENIX conference proceedings to discover cutting-edge development in operating systems. They are ceaselessly evolving in tandem with advancement in embedded smart technology. It has been widely predicted that the 6th Gen will be AI-driven, and that’s a frighteningly fabulous thought.

Stay tuned next week for tales of sleazy deals that have tailed breakthroughs in the history of operating systems. Presenting dossiers on Steve Jobs, Gary Kildall, and their archnemesis, Bill Gates.